Reliable releases depend on efficient Continuous Integration (CI) practices. As organizations adopt DevOps methodologies, a well-tuned CI workflow becomes essential for maintaining quality and reducing deployment risk. Moving from traditional development cycles to automated, continuous workflows requires both tooling investment and deliberate process optimization. This guide walks experienced practitioners through practical tips for optimizing a CI workflow: trigger configuration, parallelization and caching, test automation, security checks, and metrics-driven improvement, all as part of a broader DevOps pipeline.

Key Points

- Implementing effective CI triggers to minimize build times and enhance responsiveness.

- Optimizing build pipelines through parallelization and caching techniques for faster feedback loops.

- Employing comprehensive test automation to ensure code stability and reduce manual testing overhead.

- Integrating security and compliance checks seamlessly within CI processes to uphold standards.

- Leveraging metrics and feedback for continuous improvement and strategic decision-making.

Understanding the Foundations of Effective CI Operations

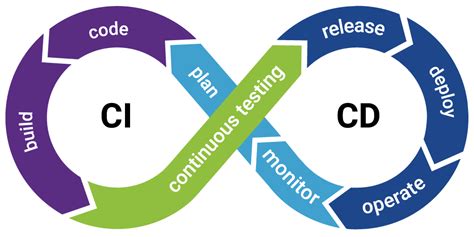

Continuous Integration has evolved from a set of procedural best practices into an automated ecosystem that demands careful orchestration. At its core, CI aims to integrate code changes from multiple contributors into a shared repository several times a day, ensuring that each modification passes a battery of automated tests and validations. This approach promotes early defect detection, reduces integration conflicts, and accelerates time-to-market. To master CI, professionals must understand both the technological underpinnings and the strategic implications.

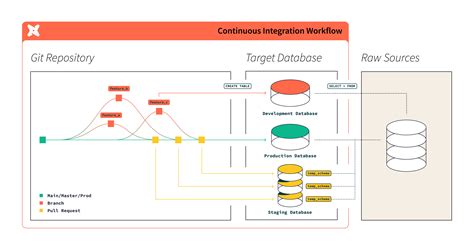

The architecture of a resilient CI workflow starts with selecting an appropriate tool, such as Jenkins, GitLab CI, CircleCI, or Azure DevOps. Each platform offers distinctive features, but the key is aligning capabilities with project-specific requirements, including scalability, ease of maintenance, and integration with existing development tools. For example, Jenkins, with its extensive plugin ecosystem, remains a strong choice for complex customization, whereas GitLab CI is built into GitLab and configured from a file in the repository, which simplifies setup for small to medium teams.

Designing a High-Performance CI Pipeline

Constructing an optimized CI pipeline demands careful attention to the logical flow and resource management. This involves several interrelated facets:

Effective Trigger Configuration for Responsiveness

Trigger configuration is fundamental: builds should start promptly when they are needed without consuming resources when they are not. Automated triggers should respond not only to new commits but also to merge requests, feature branch creation, and scheduled maintenance windows. Webhooks start builds as soon as an event occurs, while polling at an interval matched to the development cadence serves as a fallback where webhooks are unavailable; together they keep latency low without overwhelming the infrastructure. For instance, branch-specific triggers let teams hold back builds of unstable feature branches until manual review, preserving the stability of the main build streams.
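The trigger rules described above can be sketched as a small decision function. This is only an illustration in Python, not the configuration syntax of any particular CI system: the `CiEvent` type, the pipeline names (`full`, `quick`, `scheduled`), the `main` branch name, and the `experimental/` prefix are all assumptions made for the example.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical event model; real CI systems expose similar fields under their own names.
@dataclass(frozen=True)
class CiEvent:
    kind: str    # "push", "merge_request", or "schedule"
    branch: str  # branch the event refers to

PROTECTED_BRANCHES = {"main"}           # assumed name of the main build stream
MANUAL_ONLY_PREFIX = "experimental/"    # assumed prefix for unstable feature work


def select_pipeline(event: CiEvent) -> Optional[str]:
    """Return the name of the pipeline to run, or None to skip the build."""
    if event.kind == "schedule":
        return "scheduled"              # timer-driven run, e.g. a maintenance window
    if event.kind == "merge_request":
        return "full"                   # validate everything before merging
    if event.kind == "push":
        if event.branch in PROTECTED_BRANCHES:
            return "full"
        if event.branch.startswith(MANUAL_ONLY_PREFIX):
            return None                 # unstable work waits for a manual trigger
        return "quick"                  # fast feedback on ordinary feature branches
    return None                         # unknown event types never start a build


print(select_pipeline(CiEvent(kind="push", branch="feature/login")))
```

Keeping the decision in one place makes it easy to review which events consume build capacity and which are deliberately ignored.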

Parallelization and Caching: Speeding Up Feedback Loops

The next optimization is to split build processes into parallel jobs wherever possible, which can significantly reduce overall build times. Modern CI systems support staged parallelization, enabling multiple tests, static analysis, or deployment steps to execute simultaneously. Cache management complements this by storing dependencies, previous build artifacts, and test results, eliminating redundant computation across runs. For example, caching the dependencies fetched by package managers such as npm or Maven prevents repeated downloads, which, depending on project size, can cut build times by up to 50%.
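One way to split a test suite across parallel jobs is to balance shards by recorded test duration. The sketch below uses a greedy "longest test first" heuristic; the function name `shard_tests` and the idea that per-test durations are available from earlier runs are assumptions for illustration, and the heuristic gives a reasonable balance rather than a guaranteed optimum.

```python
import heapq
from typing import Dict, List


def shard_tests(durations: Dict[str, float], shard_count: int) -> List[List[str]]:
    """Assign tests to shards so that total runtime per shard is roughly even.

    durations maps a test name to its runtime in seconds from a previous run.
    """
    if shard_count < 1:
        raise ValueError("shard_count must be at least 1")

    # Min-heap of (accumulated seconds, shard index): the lightest shard is on top.
    heap = [(0.0, index) for index in range(shard_count)]
    heapq.heapify(heap)
    shards: List[List[str]] = [[] for _ in range(shard_count)]

    # Place the longest tests first; ties are broken by name for determinism.
    ordered = sorted(durations.items(), key=lambda item: (-item[1], item[0]))
    for name, seconds in ordered:
        total, index = heapq.heappop(heap)
        shards[index].append(name)
        heapq.heappush(heap, (total + seconds, index))
    return shards


example = {"test_api": 30.0, "test_db": 20.0, "test_ui": 10.0, "test_cli": 10.0}
print(shard_tests(example, 2))
```

Each parallel job would then run only the tests in its own shard, so the slowest shard, not the sum of all tests, determines how long feedback takes.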

| Metric | Reported effect |
|---|---|
| Average build time reduction | Up to 40-50% through parallelization and caching in optimized pipelines |
| Dependency caching effectiveness | Can halve dependency resolution time, especially in large monorepos |
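Dependency caches are typically keyed on the content of a lockfile, so the cache is reused until the dependencies actually change. A minimal sketch of that idea follows; the function name `cache_key`, the `deps` prefix, and the 16-character truncation are illustrative choices, not the behavior of any specific CI product.

```python
import hashlib


def cache_key(lockfile_bytes: bytes, prefix: str = "deps") -> str:
    """Derive a cache key that changes only when the lockfile content changes."""
    digest = hashlib.sha256(lockfile_bytes).hexdigest()
    # A shortened digest keeps keys readable; 16 hex characters is an arbitrary choice.
    return f"{prefix}-{digest[:16]}"


# Identical lockfile content yields the same key, so the cached dependencies are reused.
first = cache_key(b'{"lodash": "4.17.21"}')
second = cache_key(b'{"lodash": "4.17.21"}')
changed = cache_key(b'{"lodash": "4.17.20"}')
print(first == second, first == changed)
```

In a pipeline, the bytes would come from reading a file such as `package-lock.json` or `pom.xml`; hashing content rather than using a timestamp avoids invalidating the cache on every checkout.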

Automating Testing for Continuous Assurance

No CI workflow is complete without rigorous test automation. The objective is to incorporate tests at multiple levels (unit, integration, system, and acceptance) to detect regressions early. It is tempting to rely on a single testing layer, but confidence requires coverage at every level a change can affect.

Strategies for Maximizing Test Efficiency

Automation pipelines should prioritize fast, reliable tests. Unit tests, which exercise the logic of individual components, should run in near-real time with minimal environment dependencies. Integration tests, while more complex, can be parallelized or executed conditionally to achieve faster results. Additionally, adopting test impact analysis, which identifies the specific tests affected by recent code changes, can prevent unnecessary test runs and conserve resources. For example, Jest can restrict a run to tests related to changed files (its `--onlyChanged` and `--changedSince` options), and pytest can do something similar through plugins such as pytest-testmon, thereby tightening feedback loops.
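The core of test impact analysis can be sketched in a few lines, assuming a coverage map from each test to the source files it exercises is already available (for instance, from a previous instrumented run). The function name `select_tests`, the map format, and the file names are hypothetical; real tools build and maintain this mapping themselves.

```python
from typing import Dict, Iterable, Set


def select_tests(
    changed_files: Iterable[str],
    coverage_map: Dict[str, Set[str]],
    all_tests: Set[str],
) -> Set[str]:
    """Pick the tests affected by a change.

    coverage_map maps a test name to the set of source files it exercises.
    """
    changed = set(changed_files)
    known_files: Set[str] = set().union(*coverage_map.values())

    # Safe fallback: a changed file with no coverage data could affect anything,
    # so run the whole suite instead of guessing.
    if changed - known_files:
        return set(all_tests)

    return {test for test, files in coverage_map.items() if files & changed}


coverage = {
    "test_cart": {"cart.py", "pricing.py"},
    "test_auth": {"auth.py"},
}
print(select_tests(["pricing.py"], coverage, set(coverage)))
```

The fallback to the full suite trades some speed for safety: selective runs are only trustworthy for files the coverage data actually knows about.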

Embedding Security and Compliance Checks in CI

Modern CI pipelines must go beyond functional validation to incorporate security scans, code quality metrics, and compliance validation seamlessly. Integrating tools such as Snyk, SonarQube, or Fortify within your CI process ensures that vulnerabilities are identified early, reducing costly remediation post-deployment.

Strategies for Harmonizing Security and Quality Assurance

Security tests should be integral steps in your CI workflows, arranged so that they do not become bottlenecks. For example, static application security testing (SAST) can be executed in parallel with other validation steps. Regularly updating security signatures and keeping the vulnerability database current, with rules mapped to industry references such as the OWASP Top 10, improves detection accuracy. Additionally, establishing gates, meaning criteria that code must meet before promotion, enforces quality and security policies without manual intervention.
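A gate of this kind can be expressed as a policy check over scanner findings. The sketch below is an assumption-laden illustration: the `Finding` type, the severity names, and the limits in `SEVERITY_LIMITS` are invented for the example and do not reflect the output format of Snyk, SonarQube, Fortify, or any other tool.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Dict, Iterable, List

# Assumed policy: maximum number of findings tolerated per severity level.
SEVERITY_LIMITS: Dict[str, int] = {"critical": 0, "high": 0, "medium": 5}


@dataclass(frozen=True)
class Finding:
    rule_id: str   # identifier of the rule that fired (hypothetical format)
    severity: str  # "critical", "high", "medium", or "low"


def evaluate_gate(
    findings: Iterable[Finding],
    limits: Dict[str, int] = SEVERITY_LIMITS,
) -> List[str]:
    """Return a list of policy violations; an empty list means the gate passes."""
    counts = Counter(finding.severity for finding in findings)
    return [
        f"{severity}: {counts[severity]} found, limit {limit}"
        for severity, limit in limits.items()
        if counts[severity] > limit
    ]


report = [Finding("SQLI-001", "high"), Finding("LOG-014", "medium")]
violations = evaluate_gate(report)
print("gate passed" if not violations else violations)
```

A pipeline step would fail the build when the returned list is non-empty, which is what lets the policy be enforced without a manual sign-off.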

| Metric | Reported effect |
|---|---|
| Impact of embedded security checks | Approximately 70% fewer post-deployment vulnerabilities when checks are integrated into CI pipelines |
| Average impact analysis time | Under 10 minutes for incremental code changes when optimized with caching and parallel processes |

Monitoring, Metrics, and Continuous Improvement

A critical, yet often overlooked, aspect of optimizing CI workflows involves systematic monitoring and feedback collection. Gathering detailed metrics—such as build times, failure rates, test coverage, and security incidents—provides insights into bottlenecks and process fragility. Over time, data-driven innovations—like dynamic pipeline adjustments, resource scaling, and process fine-tuning—drive continuous workflow maturation.

Implementing Effective Metrics and Feedback Loops

Establishing a dashboard that visualizes real-time CI metrics allows teams to spot issues proactively. For instance, a sudden spike in build times may indicate resource contention or excessive dependency updates. Incorporating automated reporting, alongside regular retrospectives, ensures lessons learned translate into process improvements. Moreover, integrating developer feedback, via pull request comments or team lead reviews, helps keep pipeline configurations aligned with evolving project goals.
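Two of the metrics mentioned above, failure rate and build-time spikes, are simple to compute from a build history. The following is a rough sketch: the function names and the 1.5x-median threshold are arbitrary assumptions for illustration, and a real dashboard would likely use a more robust baseline.

```python
from statistics import median
from typing import Sequence


def failure_rate(results: Sequence[bool]) -> float:
    """Fraction of builds that failed; each entry is True for a passing build."""
    if not results:
        return 0.0
    failures = sum(1 for passed in results if not passed)
    return failures / len(results)


def is_duration_spike(
    history_seconds: Sequence[float],
    latest_seconds: float,
    factor: float = 1.5,
) -> bool:
    """Flag the latest build if it took more than `factor` times the median of past builds."""
    if not history_seconds:
        return False  # nothing to compare against yet
    return latest_seconds > factor * median(history_seconds)


print(failure_rate([True, True, False, True]))
print(is_duration_spike([300.0, 310.0, 290.0], 600.0))
```

Comparing against the median rather than the mean keeps a single unusually slow build in the history from masking later spikes.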

Conclusion: The Path to Mastery in CI Optimization

In sum, mastering CI optimization is a multifaceted endeavor that combines technological acumen with strategic process enhancements. The key lies in designing intelligent trigger mechanisms, leveraging parallelization and caching, automating comprehensive testing, embedding security and compliance validation, and harnessing metrics for continual refinement. Organizations that invest in these areas can expect faster delivery cycles, higher quality releases, and a more resilient development culture. As software engineering continues to evolve, refining your CI workflows in line with these practices remains important for sustained competitive advantage.

How can I reduce build times without sacrificing quality?

Reducing build times involves strategic parallelization, effective caching, and selective testing. Implementing incremental builds and test impact analysis ensures only relevant sections are revalidated, balancing speed with reliability. Leveraging containerized environments also stabilizes build dependencies, preventing delays caused by environment inconsistencies.

What are best practices for integrating security into CI/CD pipelines?

Embedding static and dynamic security scans within your pipelines, automating vulnerability detection, and establishing security gates help maintain a secure development cycle. Keeping security tools updated, analyzing results continuously, and fostering a security-first culture are essential for proactive defense and compliance.

How do I measure the effectiveness of my CI pipeline?

Establish key performance indicators such as build times, failure rates, code coverage, and security incident frequency. Utilizing dashboards and regular reviews supports data-driven decision-making, enabling targeted improvements that enhance overall workflow resilience and speed.

Can automation replace manual quality assurance entirely?

While automation significantly reduces manual effort and increases consistency, human oversight remains vital for complex decision-making, usability testing, and assessing user experience. An optimal approach combines automated validation with manual reviews, especially for critical or novel features.