Pinning down local maxima and minima on a graph quickly is a valuable skill in fields such as finance, engineering, and data science, where it speeds up analysis and supports data-driven decisions. This article covers practical techniques for spotting them: the first derivative test, and the smoothing steps that make it reliable on noisy data.

Key Insights

- Local maxima and minima mark the turning points in a data set, and finding them quickly pays off in practical analysis.

- The first derivative test identifies them: look for where the derivative changes sign.

- Smoothing noisy data before applying the test reduces false detections.

Understanding the Basics of Local Max and Min

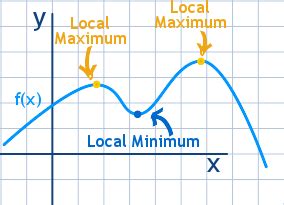

To spot local maxima and minima quickly, start with precise definitions. A function f has a local maximum at c if f(c) ≥ f(x) for every x in some open interval around c, and a local minimum at c if f(c) ≤ f(x) for every x in such an interval. Unlike global extrema, which are the largest or smallest values over the whole domain, local extrema only need to beat their immediate neighbors, so a single curve can have many of them. These local turning points are what most practical analyses look for.

Leveraging First Derivative Tests

One of the most efficient ways to identify local extrema is the first derivative test. First find the critical points, where f′(x) = 0 or f′(x) is undefined; these are the candidate turning points. Then check the sign of the derivative on either side of each candidate c: if f′ changes from positive to negative at c, f has a local maximum there; if it changes from negative to positive, f has a local minimum; if it does not change sign, c is not a local extremum. The technique has direct real-world uses, such as finding peak profit margins in financial data or points of maximum stress in structural engineering models.

Consider a practical example: you are analyzing stock prices to find peaks, which may mark potential selling points. Because prices are recorded at discrete times, the derivative is approximated by the change between consecutive prices; where that change switches from positive to negative, the series has a local maximum. The computation is simple and fast, which makes it well suited to time-sensitive analysis.
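The test above can be sketched for sampled data in Python. This is a minimal illustration, assuming the function is only available as values at increasing x positions (as with a price series), so f′ is approximated by the slope between consecutive samples. The helper `find_extrema` is a hypothetical name, and the test function f(x) = x³ − 3x is an illustrative choice: its derivative 3x² − 3 is zero at x = −1 and x = 1, so the sketch should report a maximum at −1 and a minimum at 1.

```python
from typing import List, Tuple


def find_extrema(xs: List[float], ys: List[float]) -> Tuple[List[float], List[float]]:
    """Discrete first derivative test on samples (xs[i], ys[i]), xs increasing.

    Returns (maxima_x, minima_x): the x positions where the approximate
    derivative changes sign.
    """
    # slopes[i] approximates f'(x) on the interval [xs[i], xs[i + 1]].
    slopes = [(ys[i + 1] - ys[i]) / (xs[i + 1] - xs[i]) for i in range(len(xs) - 1)]
    maxima, minima = [], []
    # xs[i] is the sample shared by intervals i - 1 and i.
    for i in range(1, len(slopes)):
        before, after = slopes[i - 1], slopes[i]
        if before > 0 and after < 0:    # rising, then falling: local maximum
            maxima.append(xs[i])
        elif before < 0 and after > 0:  # falling, then rising: local minimum
            minima.append(xs[i])
    return maxima, minima


# f(x) = x**3 - 3x, sampled at x = -3.0, -2.9, ..., 3.0.
xs = [i / 10 for i in range(-30, 31)]
ys = [x ** 3 - 3 * x for x in xs]
maxima, minima = find_extrema(xs, ys)
print(maxima, minima)  # → [-1.0] [1.0]
```

For a price series, pass the day indices (0, 1, 2, ...) as `xs` and the closing prices as `ys`. Flat stretches where two consecutive samples are equal give a zero slope and are skipped by this sketch, so a peak spread over a plateau would need an extra rule.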

Implementing Smoothing Techniques

In real-world data, noise can obscure true local maxima and minima and trigger false detections. Smoothing the data first can greatly improve accuracy. Methods such as moving averages or smoothing splines reduce noise and make the underlying trend clearer.

For instance, in financial time series analysis, a moving average filter can smooth out daily stock price fluctuations, leaving clearer peaks and troughs. The smoothed signal is then easier to analyze with the first derivative test. In signal processing, spline smoothing similarly helps locate turning points in waveforms, which is essential for accurate signal interpretation.
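A minimal sketch of smoothing followed by the first derivative test, assuming a centered moving average with an odd window. The helpers `moving_average` and `turning_points` are hypothetical names, and the input is synthetic: a sine wave plus a made-up repeating noise pattern chosen so that a 3-point average cancels it exactly. Real noise will not cancel this neatly, but the example shows how noise produces spurious turning points in the raw series that disappear after smoothing.

```python
import math
from typing import List, Tuple


def moving_average(values: List[float], window: int) -> List[float]:
    """Centered moving average; the result has len(values) - window + 1 points."""
    if window < 1 or window % 2 == 0:
        raise ValueError("window must be a positive odd integer")
    half = window // 2
    return [sum(values[i - half:i + half + 1]) / window
            for i in range(half, len(values) - half)]


def turning_points(values: List[float]) -> Tuple[List[int], List[int]]:
    """Indices where the first difference changes sign (discrete first derivative test)."""
    diffs = [b - a for a, b in zip(values, values[1:])]
    maxima, minima = [], []
    for i in range(1, len(diffs)):
        if diffs[i - 1] > 0 and diffs[i] < 0:
            maxima.append(i)
        elif diffs[i - 1] < 0 and diffs[i] > 0:
            minima.append(i)
    return maxima, minima


# Hypothetical test signal: a sine wave (period 40 samples) plus a repeating
# noise pattern that sums to zero over any 3 consecutive samples.
noise = [0.3, -0.15, -0.15]
raw = [math.sin(2 * math.pi * i / 40) + noise[i % 3] for i in range(80)]
raw_max, raw_min = turning_points(raw)

window = 3
half = window // 2
smooth_max, smooth_min = turning_points(moving_average(raw, window))
# Shift indices so they refer to positions in the raw series.
smooth_max = [i + half for i in smooth_max]
smooth_min = [i + half for i in smooth_min]

print(len(raw_max), len(raw_min))  # many spurious turning points
print(smooth_max, smooth_min)      # → [10, 50] [30, 70]
```

A centered window avoids shifting peaks in time but cannot be computed for the newest samples, which matters for live price data. A trailing average can be, at the cost of delaying each detected turning point by about half a window, so the window type and size are tuning choices.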

Is it necessary to use smoothing before applying the first derivative test?

While not mandatory, smoothing can significantly improve the accuracy and reliability of your results, particularly in noisy data sets. It filters out short-term fluctuations so the underlying trend stands out. The trade-off is that heavy smoothing can flatten genuine peaks or shift their positions, so choose the smoothing window with care.

Can the same methods apply to non-continuous data?

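For non-continuous or discrete data, the derivative is not defined, so calculus-based methods do not apply directly. Their discrete counterpart does: compare each sample with its neighbors, or look for sign changes in the differences between consecutive samples. To keep noise from causing false detections, pair this with techniques from digital signal processing and statistics designed for discrete data, such as a moving median filter, or fit a model such as an autoregressive model and search its fitted values.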
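A minimal sketch for discrete data, assuming integer samples such as counts: each sample is compared with its neighbors (the discrete counterpart of a sign change in f′), and a centered moving median is applied first to suppress isolated spikes. The helpers `moving_median` and `discrete_extrema` are hypothetical names. Reporting a flat run at its middle index is an assumption of this sketch, needed because median filters often produce short plateaus. `statistics.median` is from the Python standard library.

```python
from statistics import median
from typing import List, Tuple


def moving_median(values: List[float], window: int) -> List[float]:
    """Centered moving median; drops window // 2 samples at each end."""
    if window < 1 or window % 2 == 0:
        raise ValueError("window must be a positive odd integer")
    half = window // 2
    return [median(values[i - half:i + half + 1])
            for i in range(half, len(values) - half)]


def discrete_extrema(values: List[float]) -> Tuple[List[int], List[int]]:
    """Indices of samples (or flat runs) higher or lower than both neighbors.

    Runs of equal values are treated as one point and reported at their middle
    index; runs touching either end of the series are not reported.
    """
    # Collapse consecutive equal values into (start, end, value) runs.
    runs = []
    start = 0
    for i in range(1, len(values) + 1):
        if i == len(values) or values[i] != values[start]:
            runs.append((start, i - 1, values[start]))
            start = i
    maxima, minima = [], []
    for k in range(1, len(runs) - 1):
        prev_v, (s, e, v), next_v = runs[k - 1][2], runs[k], runs[k + 1][2]
        if prev_v < v > next_v:
            maxima.append((s + e) // 2)
        elif prev_v > v < next_v:
            minima.append((s + e) // 2)
    return maxima, minima


# Hypothetical integer counts with a one-sample spike at index 3.
counts = [1, 2, 3, 12, 4, 5, 6, 7, 6, 5, 4, 3, 4, 5]
print(discrete_extrema(counts))  # → ([3, 7], [4, 11])

window = 3
half = window // 2
smooth_max, smooth_min = discrete_extrema(moving_median(counts, window))
print([i + half for i in smooth_max], [i + half for i in smooth_min])  # → [7] []
```

The median removes the spike at index 3 entirely instead of spreading it over its neighbors as a mean would. It also flattens the one-sample dip at index 11, which illustrates the trade-off: a wider window suppresses more noise but can erase small genuine turning points.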

In conclusion, local maxima and minima can be spotted quickly and reliably by combining the first derivative test with smoothing. These strategies help you analyze complex data with precision and make timely, well-informed decisions.