Is Zero a Natural Number? Unraveling a Math Debate You Need to Know

In mathematics, debates often arise over fundamental definitions. One that many people are curious about is whether zero should be classified as a natural number. The question is not just an academic exercise: the answer you assume affects how you read textbooks, write proofs, and index data in programs. Let’s work through it with practical insights and examples.

Why the Confusion Matters

Many people are unsure how to classify zero when they study mathematics, and that uncertainty can lead to missteps in both basic and advanced work. The root cause is that the definition of natural numbers varies between mathematical frameworks. This guide breaks down the arguments from different mathematical perspectives, discusses the practical implications, and offers a resolution that aligns with commonly accepted conventions. Whether you’re a student, a teacher, or just someone who wants to understand this fundamental debate, this guide is for you.

Quick Reference

- Immediate action item: Familiarize yourself with the different definitions of natural numbers.

- Essential tip: Understand the traditional definition of natural numbers in basic math as the set {1, 2, 3,…}.

- Common mistake to avoid: Assuming that all mathematical fields use the same definition of natural numbers without considering the context.

Understanding Natural Numbers: Definitions and Debate

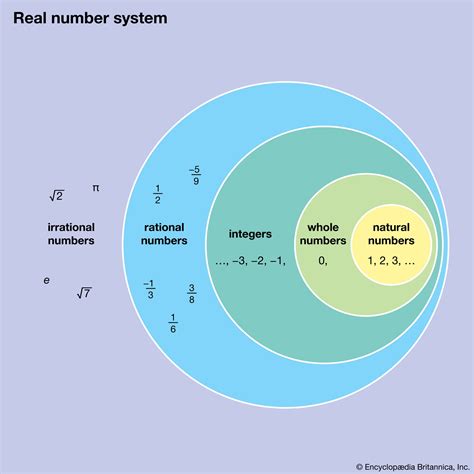

To understand the debate over whether zero is a natural number, it’s essential to explore the different definitions of natural numbers. Traditionally, natural numbers have been defined as the set of positive integers starting from 1: {1, 2, 3, 4,…}. This definition is widely used in basic arithmetic and elementary education. In more advanced contexts, such as set theory and certain other branches of mathematics, the definition is often expanded to include zero.

Let’s look at two major perspectives on the classification of natural numbers:

Traditional Definition

According to the traditional definition, natural numbers begin with 1. This view stems from the fact that counting starts with 1 as we count objects: “one apple,” “two apples,” and so on. Zero is generally not included in this traditional set because it does not represent a count of objects but rather a notion of ‘nothingness’ or a placeholder in numeral systems.

Modern Expanded Definition

In more advanced mathematical fields, natural numbers are often defined as the set {0, 1, 2, 3,…}. This expanded definition is prevalent in areas like set theory, computer science, and combinatorics. Including zero is convenient there for two reasons. First, the empty set has exactly zero elements, so the answer to “how many elements?” always lands inside the natural numbers. Second, zero is the identity for addition (n + 0 = n), which shortens many proofs and algorithms.
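One common way set theory ties zero to the empty set is the von Neumann construction, in which 0 is the empty set and each successor is built as n ∪ {n}. The sketch below is only an illustration of that idea; the function name `von_neumann` is a hypothetical helper, not something from a library.

```python
def von_neumann(n: int) -> frozenset:
    """Return the von Neumann set that represents the natural number n (n >= 0)."""
    if n < 0:
        raise ValueError("n must be non-negative")
    current = frozenset()  # 0 is represented by the empty set
    for _ in range(n):
        # successor(k) = k ∪ {k}
        current = current | frozenset([current])
    return current


zero = von_neumann(0)
three = von_neumann(3)

print(len(zero))                # 0
print(len(three))               # 3
print(von_neumann(2) in three)  # True
```

In this representation the set for n has exactly n elements, so starting the natural numbers at the empty set (zero) is the natural base case.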

Detailed How-To: Using Zero in Natural Number Sets

Now that we’ve clarified the definitions, let’s delve into practical applications and implications of both traditional and modern definitions. This section will guide you through understanding when to use each definition and why it’s important for various fields of study.

When to Use the Traditional Definition

The traditional definition is still widely used in basic arithmetic and elementary education. Here’s how to apply this definition in your study or work:

- Elementary Education: When teaching basic counting skills, emphasize that natural numbers begin with 1. For example, in first-grade math, ensure students understand that counting starts with “one.”

- Basic Calculations: Use the traditional definition for simple counting problems where zero isn’t part of the count. For instance, when tallying items in a basic inventory or counting out coins, treat zero as separate from the natural numbers.

- Historical Context: Acknowledge the historical roots of natural numbers in early civilizations, which primarily viewed them as positive integers starting from 1.

Practical example: If you are teaching children to count objects, start from "one apple" and move forward with "two apples," "three apples," etc., thereby not including zero in your count.

When to Use the Modern Expanded Definition

In more advanced mathematics, the expanded definition of natural numbers, including zero, becomes crucial. Here’s how to apply this definition:

- Advanced Mathematics: Use the expanded definition in set theory, combinatorics, and algebra. For instance, when defining functions, algorithms, and sequences, consider zero as part of the natural numbers set.

- Computer Science: In computer programming, zero-based indexing is common. When working with arrays or data structures, view zero as a valid natural number index.

- Mathematical Theories: When developing mathematical theories or proofs, the inclusion of zero in natural numbers can simplify notation and improve coherence.

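Practical example: In many programming languages (C, Java, and Python, for example), the first element of an array sits at index 0, so zero is treated as a valid natural number index. Some languages, such as Fortran and Lua, start at 1 by default, so check the convention of the language you are using.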
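A short Python sketch of zero-based indexing follows. The list contents are made-up sample data, and the last loop shows one way to present human-friendly counting from 1 on top of zero-based storage.

```python
fruits = ["apple", "banana", "cherry"]  # hypothetical sample data

first = fruits[0]                         # the first element lives at index 0
valid_indices = list(range(len(fruits)))  # indices run from 0 to len - 1

print(first)          # apple
print(valid_indices)  # [0, 1, 2]

# When showing positions to people, count from 1 instead.
for position, fruit in enumerate(fruits, start=1):
    print(position, fruit)
```

Both conventions coexist here: the storage uses {0, 1, 2} while the displayed positions use {1, 2, 3}.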

Practical FAQ

Why does the inclusion of zero in natural numbers matter?

Including zero in the set of natural numbers simplifies certain mathematical concepts and algorithms. In computer science, it matches the common practice of starting indices at zero. In mathematics, it gives the natural numbers an additive identity (n + 0 = n) and lets the size of the empty set be a natural number, so fewer statements need a special case. In elementary education and basic arithmetic, however, starting from 1 keeps the idea of counting clear and simple.
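One concrete place where zero shows up naturally is in counts and sums over empty collections. The sketch below uses a hypothetical helper, `count_even`, to show that a count can legitimately come out as 0 and that an empty sum is 0 as well.

```python
def count_even(values):
    """Count how many of the given integers are even."""
    return sum(1 for v in values if v % 2 == 0)


no_evens = count_even([1, 3, 5])  # nothing matches, so the count is 0
empty_total = sum([])             # the sum of no terms is 0

print(no_evens)              # 0
print(empty_total)           # 0
print(7 + empty_total == 7)  # True: adding zero changes nothing
```

Under the convention that includes zero, both results are natural numbers; under the traditional convention, they have to be handled as a separate case.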

How do different fields treat the classification of natural numbers?

Different fields of study adopt different definitions of natural numbers based on their practical needs. For instance:

- Elementary Education: Focuses on the traditional definition starting from 1.

- Advanced Mathematics: Often uses the expanded definition including zero for the sake of consistency and theoretical convenience.

- Computer Science: Frequently employs zero-based indexing to align with practical programming practices.

- Statistics: May adopt either definition based on specific contextual needs.
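Because the convention changes from field to field, it helps to make the choice explicit rather than leave it implied. The following is a minimal sketch of that idea; `is_natural` and its `include_zero` parameter are illustrative names, not a standard API.

```python
def is_natural(value, include_zero: bool) -> bool:
    """Check whether value is a natural number under the chosen convention."""
    # Reject non-integers; bool is excluded because it is a subclass of int.
    if isinstance(value, bool) or not isinstance(value, int):
        return False
    lower_bound = 0 if include_zero else 1
    return value >= lower_bound


print(is_natural(0, include_zero=True))   # True  (expanded definition)
print(is_natural(0, include_zero=False))  # False (traditional definition)
print(is_natural(5, include_zero=False))  # True  (both definitions agree)
```

Requiring the caller to pass `include_zero` every time is a deliberate choice: it forces the convention to be stated at each use instead of being assumed.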

Can zero be considered a whole number?

Yes, zero is considered a whole number. In the usual school usage, whole numbers are the non-negative integers {0, 1, 2, 3,…}, and zero belongs to that set because it is an integer that is not negative. How whole numbers relate to natural numbers depends on the convention: under the traditional definition, the natural numbers are the whole numbers with zero removed; under the expanded definition, the two sets are identical.
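The relationship between these sets can be checked on a small finite sample. The sketch below compares the first few whole numbers against both conventions for natural numbers; the cutoff `LIMIT` is an arbitrary value chosen for illustration.

```python
LIMIT = 10  # arbitrary cutoff so the sets are finite

whole = set(range(0, LIMIT))               # {0, 1, ..., 9}
naturals_from_one = set(range(1, LIMIT))   # traditional convention
naturals_from_zero = set(range(0, LIMIT))  # expanded convention

print(naturals_from_one < whole)    # True: a proper subset, missing only zero
print(whole - naturals_from_one)    # {0}
print(naturals_from_zero == whole)  # True: the same set
```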

Conclusion

The debate over whether zero is a natural number comes down to context and practical needs. The traditional definition excludes zero, while much of modern advanced mathematics and computer science includes it. Neither choice is wrong; what matters is knowing which convention a textbook, course, or codebase uses, and stating your own choice explicitly when precision matters. Seen this way, the debate is a useful reminder that mathematical definitions are conventions chosen to fit the task at hand.