Mastering Outer Product Insights: Transformative Power Unveiled

In data science and machine learning, the outer product is a basic mathematical operation worth understanding well. It is a building block in tensor computations, image processing, and neural network design. This article explains what the outer product is, how it differs from related operations, and where it appears in practice.

Key Insights

- The outer product of two vectors is a matrix of all their pairwise element products, which makes it a basic tool for building higher-dimensional data representations.

- Its applications span across tensor computations, image processing, and neural network architecture.

- Understanding and utilizing the outer product can enhance the efficiency and effectiveness of data analysis.

Foundational Understanding of the Outer Product

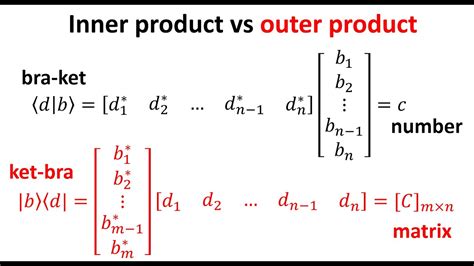

The outer product, also known as the dyadic product, multiplies every element of one vector by every element of another. For vectors a ∈ ℝ^n and b ∈ ℝ^m, the outer product C = a bᵀ is an n × m matrix with entries C_ij = a_i * b_j. Each entry is the ordinary product of two numbers, one taken from each vector, and the resulting matrix has rank at most one. In linear algebra, the same construction is used to build matrices and higher-order tensors from vectors, which is what makes it useful for data manipulation and processing.

Applications in Data Science and Machine Learning
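Before turning to the applications, the definition C_ij = a_i * b_j can be checked directly. The snippet below is a minimal sketch that assumes NumPy is available; the example vectors are arbitrary values chosen for illustration.

```python
import numpy as np

a = np.array([1, 2, 3])   # a in R^3 (n = 3)
b = np.array([4, 5])      # b in R^2 (m = 2)

# Outer product: C[i, j] = a[i] * b[j], shape (n, m)
C = np.outer(a, b)

# The same matrix written via broadcasting: column vector times row vector
C_broadcast = a[:, None] * b[None, :]

print(C)
# [[ 4  5]
#  [ 8 10]
#  [12 15]]
print(C.shape)                         # (3, 2)
print(np.array_equal(C, C_broadcast))  # True
print(np.linalg.matrix_rank(C))        # 1
```

Every row of C is a multiple of b and every column is a multiple of a, which is why the rank is one.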

The outer product appears throughout data science and machine learning. In tensor computations, it builds multi-dimensional arrays from lower-dimensional ones, and such arrays are the basic data structure of deep learning frameworks. For instance, in a convolutional neural network (CNN), a separable two-dimensional filter is the outer product of two one-dimensional filters, and in a fully connected layer the gradient of the weight matrix for a single example is the outer product of the backpropagated error and the layer input.

Moreover, in image processing, the outer product provides a way to build and decompose matrix-shaped data. Consider an image represented as a matrix A of dimensions m × n. The outer product of a length-m vector (one entry per row) and a length-n vector (one entry per column) is itself an m × n matrix, so A can be expressed or approximated as a sum of such rank-one terms. Small filter kernels built the same way support later processing steps such as edge detection or pattern recognition. In both cases, the outer product exposes structure shared across the rows and columns of the data.
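As one illustration of the edge-detection use mentioned above, the sketch below builds a 3 × 3 Sobel-style kernel as the outer product of two one-dimensional vectors and applies it to a toy image. The vectors `smooth` and `diff`, the helper `correlate_valid`, and the 5 × 6 test image are assumptions introduced for this example and do not come from any particular library; the sign of `diff` follows one common convention.

```python
import numpy as np

# Assumed 1D building blocks (illustrative choice):
smooth = np.array([1, 2, 1])   # smoothing along the vertical direction
diff = np.array([-1, 0, 1])    # finite difference along the horizontal direction

# 3x3 horizontal-gradient kernel as an outer product (Sobel-style)
kernel = np.outer(smooth, diff)

# Toy 5x6 "image": dark left half, bright right half (a vertical edge)
image = np.zeros((5, 6))
image[:, 3:] = 1.0

def correlate_valid(img, k):
    """Slide k over img without padding and sum the element-wise products."""
    kh, kw = k.shape
    out_h = img.shape[0] - kh + 1
    out_w = img.shape[1] - kw + 1
    out = np.zeros((out_h, out_w))
    for i in range(out_h):
        for j in range(out_w):
            out[i, j] = np.sum(img[i:i + kh, j:j + kw] * k)
    return out

response = correlate_valid(image, kernel)
print(kernel)
print(response)  # nonzero only where the 3x3 window straddles the edge
```

Because the kernel is an outer product, the same response could also be obtained by filtering with `smooth` along one axis and `diff` along the other, which is the usual reason separable kernels are cheaper to apply.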

How does the outer product differ from the dot product?

The outer product differs from the dot product in both its operation and its result. The dot product takes two vectors of the same length, multiplies them element by element, and sums the results into a single scalar. The outer product takes two vectors of any lengths n and m and forms all n × m pairwise products, arranged as a matrix. Thus, while the dot product yields one value, often used as a measure of similarity, the outer product yields a matrix recording how every component of one vector combines with every component of the other. When the two vectors have the same length, the two are linked: the trace of the outer product equals the dot product.
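The contrast can be seen side by side in a short sketch, assuming NumPy and two arbitrary example vectors of equal length:

```python
import numpy as np

a = np.array([1, 2, 3])
b = np.array([4, 5, 6])

dot = np.dot(a, b)       # scalar: 1*4 + 2*5 + 3*6
outer = np.outer(a, b)   # 3 x 3 matrix of all pairwise products

print(dot)               # 32
print(outer.shape)       # (3, 3)
print(np.trace(outer))   # 32, the diagonal holds the terms summed by the dot product
```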

Can the outer product be used in high-dimensional space?

Yes. The outer product applies to vectors of any length, and it extends naturally to more than two vectors: the outer product of k vectors is an order-k tensor in which each entry is the product of one element from each vector. This is how it contributes to constructing multi-dimensional arrays in tensor operations, which makes it useful in machine learning applications such as deep learning and large-scale data analysis.
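A minimal sketch of the higher-order case, assuming NumPy and three arbitrary example vectors, builds an order-3 tensor in two equivalent ways:

```python
import numpy as np

a = np.array([1, 2])
b = np.array([3, 4, 5])
c = np.array([6, 7, 8, 9])

# Order-3 tensor: T[i, j, k] = a[i] * b[j] * c[k]
T = np.einsum("i,j,k->ijk", a, b, c)

# Equivalent construction by chaining pairwise outer products
T_chained = np.multiply.outer(np.multiply.outer(a, b), c)

print(T.shape)                       # (2, 3, 4)
print(T[1, 2, 3])                    # 2 * 5 * 9 = 90
print(np.array_equal(T, T_chained))  # True
```

The `einsum` form states the index pattern explicitly, which tends to be easier to read once more than two vectors are involved.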

The outer product is a simple operation with wide reach in modern data analysis: it turns vectors into matrices and, applied repeatedly, into higher-order tensors. A solid grasp of it makes it easier to recognize and apply in practical data science and machine learning work.